We are now in an era where large foundation models are transforming fields like computer vision, natural language processing, and, more recently, time-series forecasting.

These models are reshaping time-series forecasting by enabling zero-shot forecasting, allowing predictions on new, unseen data without retraining for each dataset. This breakthrough significantly cuts development time and costs, streamlining the process of creating and fine-tuning models for different tasks.

Timeline of Foundation Forecasting Models

In October 2023, TimeGPT-1 was published as one of the first foundation forecasting models. It is designed to generalize across diverse time-series datasets without requiring specific training for each dataset. Unlike traditional forecasting methods, foundation forecasting models draw on vast amounts of pre-training data to perform zero-shot forecasting. Businesses can therefore avoid the lengthy and costly process of training and tuning a model for each task, which makes these models a good fit for industries dealing with dynamic and evolving data.

Multiple series forecasting with TimeGPT-1 (Credit: TimeGPT-1 paper)

Recent Advances

Then, in February 2024, Lag-Llama was released. It specializes in long-range forecasting by focusing on lagged dependencies, which are temporal correlations between past values and future outcomes in a time series. Lagged dependencies are especially important in domains like finance and energy, where current trends are often heavily influenced by past events over extended periods. By efficiently capturing these dependencies, Lag-Llama improves forecasting accuracy in scenarios where longer time horizons are critical.

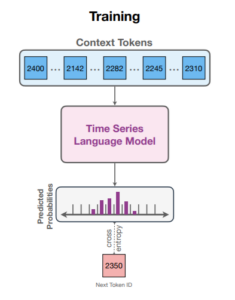
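To make the idea of lagged dependencies concrete, here is a minimal sketch of how lagged values can be turned into model inputs. It is not Lag-Llama's code. The function name `make_lag_features`, the toy series, and the lag indices 1, 2, and 3 are assumptions chosen for illustration.

```python
from typing import List, Sequence, Tuple


def make_lag_features(
    series: Sequence[float], lags: Sequence[int]
) -> Tuple[List[List[float]], List[float]]:
    """Pair each target value with the values observed `lag` steps before it.

    Illustrative helper (hypothetical name), not part of any library.
    """
    max_lag = max(lags)
    features: List[List[float]] = []
    targets: List[float] = []
    # Start at max_lag so that every requested lag exists for the target.
    for t in range(max_lag, len(series)):
        features.append([series[t - lag] for lag in lags])
        targets.append(series[t])
    return features, targets


# Toy data: each row of X holds the values 1, 2, and 3 steps before y.
series = [10.0, 12.0, 13.0, 12.0, 15.0, 16.0, 18.0]
X, y = make_lag_features(series, lags=[1, 2, 3])
for row, target in zip(X, y):
    print(row, "->", target)
```

In practice the lag set is usually tied to the data frequency (for example, the same hour on the previous day for hourly data), so the model can see seasonal history without a very long input window.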

In March 2024, Chronos, a simple yet highly effective framework for pre-trained probabilistic time-series models, was introduced. Chronos tokenizes time series values through scaling and quantization, converting continuous numerical data into a fixed vocabulary of discrete tokens. This allows it to apply transformer-based language models, typically used for text generation, to time series data. Transformers excel at identifying patterns in sequences, and by treating a time series as a sequence of tokens, Chronos lets these models predict future values effectively.

Chronos training (Image credit: Chronos: Learning the Language of Time Series)
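The scaling and quantization step described above can be sketched in a few lines. This is a simplified illustration, not the Chronos implementation. The function names, the bin range of -5 to 5, and the bin count of 10 are assumptions picked to keep the example small.

```python
from typing import List, Sequence, Tuple


def tokenize(
    series: Sequence[float],
    num_bins: int = 10,
    low: float = -5.0,
    high: float = 5.0,
) -> Tuple[List[int], float]:
    """Mean-scale a series, then map each value to a uniform bin index."""
    # Scale by the mean absolute value; fall back to 1.0 for an all-zero series.
    scale = sum(abs(v) for v in series) / len(series) or 1.0
    width = (high - low) / num_bins
    tokens: List[int] = []
    for v in series:
        idx = int((v / scale - low) // width)
        # Clip values that fall outside [low, high) into the edge bins.
        tokens.append(min(max(idx, 0), num_bins - 1))
    return tokens, scale


def detokenize(
    tokens: Sequence[int],
    scale: float,
    num_bins: int = 10,
    low: float = -5.0,
    high: float = 5.0,
) -> List[float]:
    """Map each token back to its bin center and undo the scaling."""
    width = (high - low) / num_bins
    return [(low + (tok + 0.5) * width) * scale for tok in tokens]


tokens, scale = tokenize([2.0, 4.0, 6.0, 8.0])
print(tokens, scale)
print(detokenize(tokens, scale))
```

The round trip is lossy: every value in a bin is reconstructed as the bin center, so a larger vocabulary gives finer resolution at the cost of more tokens for the model to learn.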

In May 2024, Salesforce introduced Moirai, an open-source foundation forecasting model designed to support probabilistic zero-shot forecasting and handle exogenous features. Moirai tackles several challenges in time series forecasting: learning across frequencies, accommodating any number of variates, and handling varying distributional properties. It is built on the Masked Encoder-based Universal Time Series Forecasting Transformer (MOIRAI) architecture and trained on the Large-Scale Open Time Series Archive (LOTSA), which includes more than 27 billion observations across nine domains. With techniques like Any-Variate Attention and flexible parametric distributions, Moirai delivers scalable, zero-shot forecasting on diverse datasets without requiring task-specific retraining, marking a significant step toward universal time series forecasting.

IBM’s Tiny Time Mixers (TTM), released in June 2024, offer a lightweight alternative to traditional time series foundation models. Instead of using the attention mechanism of transformers, TTM is an MLP-based model that relies on fully connected neural networks. Innovations like adaptive patching and resolution prefix tuning allow TTM to generalize effectively across diverse datasets while handling multivariate forecasting and exogenous variables. Its efficiency makes it ideal for low-latency environments with limited computational resources.
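To illustrate what replacing attention with fully connected layers can look like, the sketch below implements a generic MLP-Mixer-style block over patches of a series. It is not IBM's TTM code and leaves out normalization, adaptive patching, and resolution prefix tuning. All function names, the patch length, and the hidden size are assumptions for illustration.

```python
import random
from typing import List, Sequence

Matrix = List[List[float]]


def make_patches(series: Sequence[float], patch_len: int) -> Matrix:
    """Split a series into non-overlapping patches, dropping any remainder."""
    n = len(series) // patch_len
    return [list(series[i * patch_len:(i + 1) * patch_len]) for i in range(n)]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


def linear(x: Matrix, w: Matrix) -> Matrix:
    """Multiply x (n x d_in) by w (d_in x d_out)."""
    wt = transpose(w)
    return [[sum(a * b for a, b in zip(row, col)) for col in wt] for row in x]


def mlp(x: Matrix, w1: Matrix, w2: Matrix) -> Matrix:
    """Two fully connected layers with a ReLU in between."""
    hidden = [[max(0.0, v) for v in row] for row in linear(x, w1)]
    return linear(hidden, w2)


def add(a: Matrix, b: Matrix) -> Matrix:
    return [[u + v for u, v in zip(ra, rb)] for ra, rb in zip(a, b)]


def mixer_block(
    patches: Matrix, w_patch1: Matrix, w_patch2: Matrix,
    w_feat1: Matrix, w_feat2: Matrix,
) -> Matrix:
    """One mixing block: mix across patches, then within each patch."""
    # Transpose so the MLP acts along the patch axis, then transpose back.
    across = transpose(mlp(transpose(patches), w_patch1, w_patch2))
    x = add(patches, across)  # residual connection
    # The second MLP acts along the values inside each patch.
    return add(x, mlp(x, w_feat1, w_feat2))  # residual connection


rng = random.Random(0)
patches = make_patches([float(v) for v in range(12)], patch_len=4)  # 3 x 4
hidden = 8
out = mixer_block(
    patches,
    random_matrix(3, hidden, rng), random_matrix(hidden, 3, rng),
    random_matrix(4, hidden, rng), random_matrix(hidden, 4, rng),
)
print(len(out), len(out[0]))
```

Because every operation is a small matrix multiplication with a fixed shape, the cost grows linearly with the number of patches rather than quadratically as in self-attention, which is one reason MLP-based designs suit low-latency settings.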

About the author: Anais Dotis-Georgiou is a Developer Advocate for InfluxData with a passion for making data beautiful with the use of Data Analytics, AI, and Machine Learning. She takes the data that she collects, does a mix of research, exploration, and engineering to translate the data into something of function, value, and beauty. When she is not behind a screen, you can find her outside drawing, stretching, boarding, or chasing after a soccer ball.

Conclusion

These foundation models are pushing the boundaries of time series forecasting, and more innovation is likely to follow. The next frontier is combining time series models with language models, so that traditional sensor data can be analyzed together with unstructured text. Potential applications range from predicting ride demand in real time to predicting health outcomes based on patient monitoring data.